What a relief! Humanity is safe from AI! AI is not going to take over humanity. For a simple reason.

There is a business to be built (actually, several businesses), competition to be ridiculed and quashed, new products to be launched, new names to be thought of (no more LLMs – Meta), pricing to be perfected, and investments to be carefully planned. With so many practical things to accomplish, where is the time to take over humanity?

When human beings become customers, they cannot be allowed to be taken over. By anyone or anything!

The sarcasm is intended (as it normally is).

There really is no substitute for the practical aspects of life as a source of sobriety, if not wisdom.

The numbers

As The Times of India reported on August 20, “OpenAI on Tuesday introduced ChatGPT Go, a Rs 399 per-month, plan built for India, directly pitting itself against rivals Perplexity, Google, and Anthropic’s Claude, which have all been pushing their premium products in the country”. The plan is powered by GPT-5 and supports payment through UPI. ChatGPT Plus continues at Rs ,999 a month and ChatGPT Pro at Rs 19,900. Perplexity, which partnered with Bharti Airtel last month to offer its services free to 360 million subscribers, charges Rs 1,660 per month for its Max tier.

Google’s Gemini is available in India at Rs 1,950 a month for Gemini Pro and Rs 24,500 for Gemini Ultra. Anthropic’s Claude is priced at Rs 1,415 a month for Claude Pro and Rs 8,300 for Claude Max. (Source: The Times of India, as above.) As of mid-2025, OpenAI is generating roughly $1 billion a month in revenue, supported by over 700 million active ChatGPT users. Interestingly, there is a certain strategy at play here, reminiscent of The Economic Times repricing itself at Rs 2 per edition in the mid-1990s: it started with an invitation price on Wednesdays, extended it to the whole working week, and in the process transformed the business newspaper from a premium product into a mass-market one.

If these numbers are not enough to convince you, consider this comment from Wired magazine, in response to an article on OpenAI chasing a $500 billion valuation, which would make it more valuable than Elon Musk’s SpaceX: ‘Hypothetically, if ChatGPT hits 2 billion users and monetizes at $5 per user per month—“half the rate of things like Google or Facebook”—that’s $120 billion in annual revenue.’
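The Wired arithmetic is easy to check, and the same formula can be run backwards on the mid-2025 figures quoted earlier. The short Python sketch below does both. The function names are my own, and it treats revenue per user as a simple average across all users, free and paying alike; that is a simplifying assumption, not how OpenAI reports its numbers.

```python
def annual_revenue(users: float, arpu_per_month: float) -> float:
    """Annual revenue in dollars: users x average monthly revenue per user x 12."""
    return users * arpu_per_month * 12


def implied_monthly_arpu(monthly_revenue: float, users: float) -> float:
    """Average monthly revenue per user implied by total monthly revenue.

    Assumption: revenue is spread evenly over all active users.
    """
    return monthly_revenue / users


# Wired's hypothetical: 2 billion users at an average of $5 a month
hypothetical = annual_revenue(2_000_000_000, 5)
print(f"Hypothetical annual revenue: ${hypothetical / 1e9:.0f} billion")

# Mid-2025 figures cited above: roughly $1 billion a month, 700 million users
current_arpu = implied_monthly_arpu(1_000_000_000, 700_000_000)
print(f"Implied revenue per user per month: ${current_arpu:.2f}")
```

Taken at face value, the mid-2025 figures work out to roughly $1.43 per user per month. The $120 billion scenario therefore depends on the user base nearly tripling and average revenue per user rising about three-and-a-half-fold.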

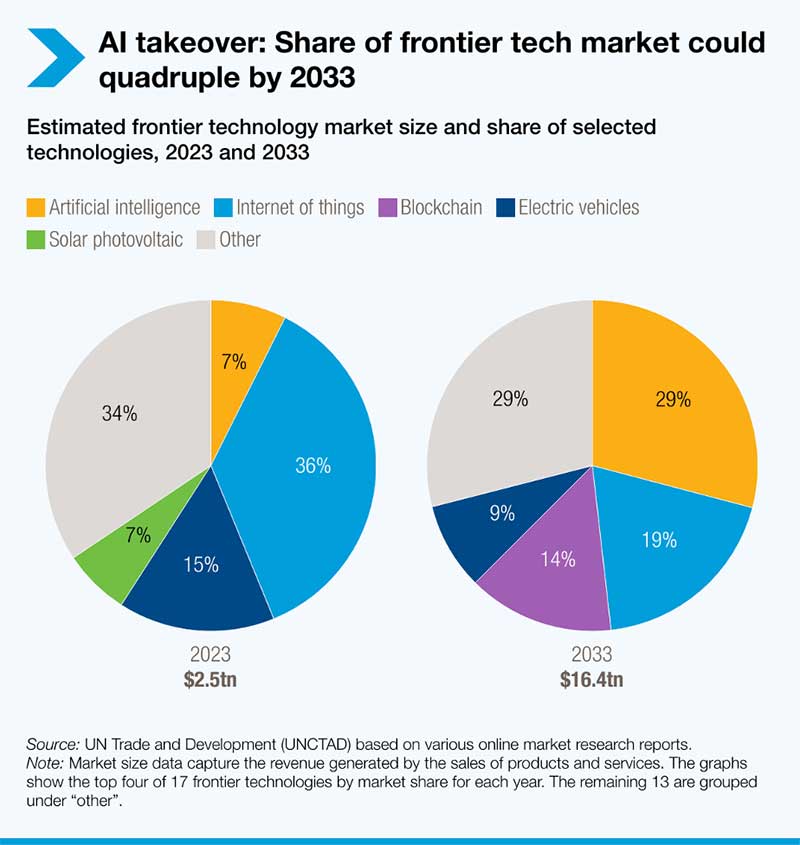

UNCTAD has published a report outlining the tech market captured in this image.

Range of investments, renting infrastructure

Readers of this page will be familiar with my repeated warnings that AI is not some single unified technology but a composite of multiple technologies. Trevor Wagner, Director of the Research Center and Chief Economist for the Computer & Communications Industry Association, has outlined in elaborate detail the investments that underpin AI as a business: AI tools and applications, AI model training, data centres and cloud services, and semiconductor design and fabrication. “AI is not a single monolithic market; it is a family of technologies operating at different but related layers, from chips and data infrastructure to algorithms and end-user applications. At each layer of this “AI stack,” multiple firms – incumbents and startups alike – are vying for advantage. Competition is intense across the entire AI stack”.

As I have argued time and again, there is no tech business without data centres; they are the foundational infrastructure. Sam Altman himself has more than hinted at trillion-dollar investments in infrastructure (https://scanx.trade/stock-market-news/global/openai-s-altman-unveils-trillion-dollar-ai-infrastructure-plan-hints-at-future-ipo/16829691). He also said OpenAI could rent out its infrastructure, as Amazon did. Readers will recall that this is how Amazon built its web services business, setting up AWS to rent out its computing power. Google followed later. Management consultants coined the term ‘co-opetition’ for situations where businesses both compete and collaborate, or at least become customers of their competitors. This is especially true in technology, where investment in infrastructure normally runs ahead of current and even future demand, creating ‘surplus capacity’ that can itself be a source of revenue.

It is now well established that cloud services are a huge business; more importantly, they are a business in their own right, in the sense that a company could do nothing but build a business in and around data centres. Or, with data centres as the basis, offer cloud services. Or simply rent them out. Many tech businesses, especially those that need to conserve capital, will prefer to deploy it in their principal business rather than in infrastructure, since infrastructure can be hired anyway. For example, Meta Platforms has signed a six-year cloud computing services deal with Google, The Information reported late Thursday, citing sources. Meta will tap Google Cloud services for its artificial intelligence workloads and more, according to the report (https://www.investors.com/news/technology/google-stock-apple-stock-gemini-siri-iphone-agreement/). We can expect a spate of such contracts to be signed.

The chips

The other addition to the business of AI is chips. Apart from data centres, chips are the foundation of this business. Making chips is an exacting business, technologically challenging even for the tech majors, simply because it is a very different kind of business. It is debatable whether these companies have the intellectual capital to compress the maturity cycle and measure up to the stringent standards of quality and performance. Wagner points out that “major cloud companies like Google, Amazon, and Microsoft are investing heavily in in-house AI chip development, from Google’s TPU accelerators to Amazon’s Inferentia and Trainium chips”. (Such disintermediation has often been a part of the tech business.) The presumed advantage of this vertical integration is not just that they are no longer dependent on a single GPU supplier, but that they can build chips in sync with their planned offerings, much like an ASIC (application-specific integrated circuit). That is, unless they also intend to go into the chip business themselves, which is perhaps best avoided, as it would be a drain on management resources.

Moreover, with many venture capitalists (VCs) pouring funds into chip start-ups, we really don’t know whether a glut will emerge in the future. Perhaps more important, what is the guarantee that chip technology itself will not change? If it did, it might well call for a different kind of infrastructure investment. We would do well to recall the prescience of Peter Drucker, who decades ago questioned investments the auto industry was making on the premise of an unchanging engine technology. Well before the advent of hybrids and EVs, Drucker warned that engine technology would change and that this by itself would shape future investment in the auto industry.

There is a simple rule to follow: always pay attention to the pivot of any business or technology by keeping watch for potential disruption, and don't be misdirected by the peripheral, however attractive it may be right now.

We will keep exploring this space.